Maximizing Collective Intelligence Means Giving Up Control

Today marks the 45th anniversary of the Mother of All Demos, where technologies such as the mouse and hypertext were unveiled for the first time. I wanted to mark this occasion by writing about collective intelligence, which was the driving motivation of the mouse’s inventor (and my mentor), Doug Engelbart, who passed away this past July.

Doug was an avid churchgoer, but he didn’t go because he believed in God. He went because he loved the music.

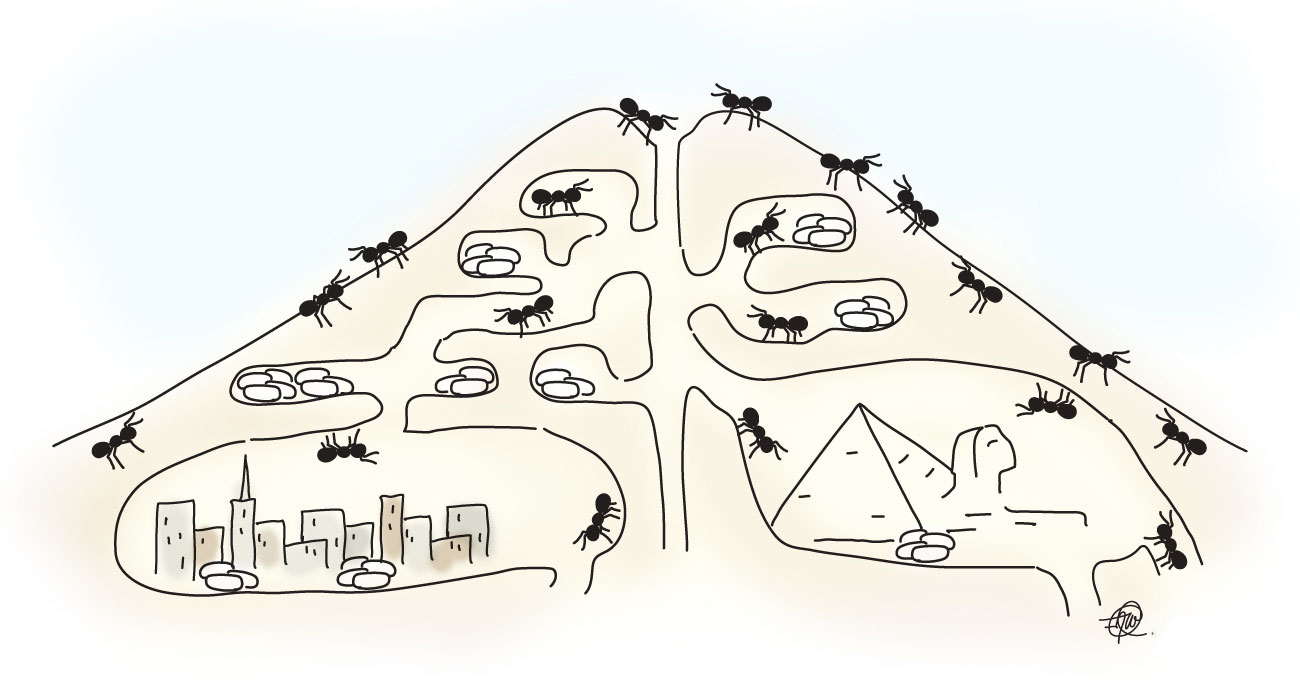

He had no problem discussing his beliefs with anyone. He once told me a story about a conversation he had struck up with a man at church, who kept mentioning “God’s will.” Doug asked him, “Would you say — when it comes to intelligence — that God is to man as man is to ants?”

“At least,” the man responded.

“Do you think that ants are capable of understanding man’s will?”

“No.”

“Then what makes you think that you’re capable of understanding God’s will?”

While Doug is best known for what he invented — the mouse, hypertext, outlining, windowing interfaces, and so on — the underlying motivation for his work was to figure out how to augment collective intelligence. I’m pleased that this idea has become a central theme in today’s conversations about collaboration, community, collective impact, and tackling wicked problems.

However, I’m also troubled that many seem not to grasp the point that Doug made in his theological discussion. If a group is behaving collectively smarter than any individual, then it — by definition — is behaving in a way that is beyond any individual’s capability. If that’s the case, then traditional notions of command-and-control do not apply. The paradigm of really smart people thinking really hard, coming up with the “right” solution, then exerting control over other individuals in order to implement that solution is faulty.

Maximizing collective intelligence means giving up individual control. It also often means giving up on trying to understand why things work.

Ants are a great example of this. Anthills are a result of collective behavior, not the machination of some hyperintelligent ant.

In the early 1980s, a political scientist named Robert Axelrod organized a tournament in which he invited people to submit computer programs to play the Iterated Prisoner’s Dilemma, a twist on the classic game theory experiment in which the same two prisoners play the game over and over again.

In the original game, the prisoners will never see each other again, and so there is no cost to screwing over the other person. This changes in the Iterated Prisoner’s Dilemma, which means there’s now an incentive to cooperate. Axelrod was using the game as a way to try to understand the nature of cooperation more deeply.

As it turned out, one algorithm completely destroyed the competition at Axelrod’s tournament: Tit for Tat. Tit for Tat followed three basic rules:

- Trust by default

- The Golden Rule of reciprocity: Do unto others what they do unto you.

- Forgive easily
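The three rules above can be sketched in a few lines of Python. This is only an illustration, not Axelrod’s tournament code: the function names are made up for this example, and the payoff values (5, 3, 1, 0) are the conventional Prisoner’s Dilemma numbers, assumed here rather than taken from this post.

```python
from typing import Callable, List, Tuple

COOPERATE, DEFECT = "C", "D"

# Assumed payoffs: (my move, their move) -> my score for that round.
PAYOFF = {
    (COOPERATE, COOPERATE): 3,  # both cooperate
    (COOPERATE, DEFECT): 0,     # I cooperate, they defect
    (DEFECT, COOPERATE): 5,     # I defect, they cooperate
    (DEFECT, DEFECT): 1,        # both defect
}

# A strategy looks at the opponent's past moves and picks its next move.
Strategy = Callable[[List[str]], str]


def tit_for_tat(opponent_history: List[str]) -> str:
    # Rule 1: trust by default (cooperate on the first move).
    if not opponent_history:
        return COOPERATE
    # Rule 2: reciprocity (copy the opponent's last move).
    # Rule 3: forgiveness falls out of rule 2, because only the most
    # recent move matters; earlier defections are not held against them.
    return opponent_history[-1]


def always_defect(opponent_history: List[str]) -> str:
    return DEFECT


def always_cooperate(opponent_history: List[str]) -> str:
    return COOPERATE


def play_match(a: Strategy, b: Strategy, rounds: int = 10) -> Tuple[int, int]:
    """Play `rounds` rounds between a and b; return their total scores."""
    moves_a: List[str] = []
    moves_b: List[str] = []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each player only sees the other's previous moves.
        move_a = a(moves_b)
        move_b = b(moves_a)
        moves_a.append(move_a)
        moves_b.append(move_b)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
    return score_a, score_b


if __name__ == "__main__":
    print(play_match(tit_for_tat, tit_for_tat))    # (30, 30)
    print(play_match(tit_for_tat, always_defect))  # (9, 14)
```

Notice that in this sketch Tit for Tat never outscores the program sitting across from it in a single match. Against a pure defector it loses one round and then breaks even. Whatever advantage it has comes from its total across many opponents, and that depends on the mix of strategies it meets.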

Axelrod was intrigued by the simplicity of Tit for Tat and by how easily it had trounced its competition. He decided to organize a followup tournament, expecting that someone would find a way to improve on Tit for Tat. Even though everyone was gunning for the previous tournament’s winner, Tit for Tat again won handily. It was a clear example of how a set of simple rules could result in collectively intelligent behavior, highly resistant to the best individual efforts to understand and outsmart it.

There are lots of other great examples of this. Prediction markets consistently outperform punditry when it comes to forecasting everything from elections to finance. Nate Silver’s forecast of the 2012 presidential election, which called all 50 states correctly (not a prediction market, but similar in spirit), was the most recent example of this. Similarly, there have been several attempts to build a service that outperforms Wikipedia by “correcting” its flaws. All have invoked the approaches people took to try to beat Tit for Tat. All have failed.

The desires to understand and to control are fundamentally human. It’s not easy to rein those instincts in. Unfortunately, if we’re to figure out ways to maximize our collective intelligence, we must find that balance between doing what we do best and letting go. It’s very hard, but it’s necessary.

Remembering Doug today, I’m struck — as I often am — by how the solution to this dilemma may be found in his stories. While he was agnostic, he was still spiritual. Spirituality and faith are about believing in things we can’t know. Spirituality is a big part of what it means to be human. Maybe we need to embrace spirituality a little bit more in how we do our work.

Miss you, Doug.

Artwork by Amy Wu.

Eugene, thanks for sharing. Anecdote. Recently I was speaking with a few folks about remote working models, which is totally the norm and accepted within my group (Monitor Institute). The folks I was speaking with were skeptical. The main reason? They were afraid that others would get distracted at home, and that productivity would decrease as a result / that project success would be compromised. I mention this anecdote because of the problem these folks raised, of the worker ant that wants to protect the nest (but isn’t built for the job), or the rogue ant that wants to sunbathe all day, or the ant that prefers to gather food when something is attacking the nest (because it is too focused on its task and doesn’t see the big picture). Not sure where I’m going with this beyond the question of whether total self-organization / lack of centralized control can work when people aren’t on the same page / don’t have the same goal in mind…

Thanks for sharing, Jess! I suspect what you’re describing is something that many people are grappling with in their organizations. It’s also very much command-and-control thinking: a set of assumptions about how or why things are currently working and a projection as to why things cannot work in a different circumstance. All of the fears you mention are valid possibilities, but they are equally valid in a face-to-face environment. I assume that you don’t currently have monitors watching everyone work throughout the day, making sure that no one is ever distracted and that productivity is always at the maximum level, right?

Are individuals accountable because they think others are constantly physically observing them, or are they accountable because they have a set of deliverables to which they have committed? If it’s the latter, then would working remotely negatively affect their ability or desire to do their work?

Maybe so! The key is to think in terms of hypotheses rather than assumptions, and then to test those hypotheses before jumping to conclusions. That said, we have enough data on remote work to know that many of these assumptions do not generally hold true.

I wrote a blog post last year entitled, “The Illusion of Control,” that you might find relevant and interesting:

http://groupaya.net/blog/2012/06/the-illusion-of-control/

Jess, your comment reminds me of an interesting book that I got directed to recently called Looptail, which tells the story of a company that struggled with this exact thing… http://www.amazon.com/Looptail-Company-Changed-Reinventing-Business/dp/1455574090/ref=sr_1_1?ie=UTF8&qid=1387843999&sr=8-1&keywords=looptail

To me there’s a link too between freeing the power of collective intelligence and choosing trust (e.g. not controlling). One example the founder gave was how the company originally had a policy of punishing all employees for the Facebook use of an errant few: because a few were abusing it, they locked everyone out. They later realized that this was a really bad approach, as the value of a sense of autonomy and trust far outweighs the cost of the few loafers who inevitably take advantage. Making policies based on fear and control is less effective for thriving (and productivity), and yet, as we all know, trust is so much less common, as the instinct to control is so seemingly innate, in our culture at least! Eugene, I love your point that making space for that “spiritual” sense of humility, and knowing how much we can’t know, is a really key step toward loosening the reins in work culture to release the intelligence and engagement of the collective (and of individuals, as we’re much happier with autonomy and a sense of higher purpose and opportunity for development in our work as well: www.ted.com/talks/dan_pink_on_motivation.html).

I think giving up control makes a lot of sense under the right circumstances. In fact, I’d love to see a controlled study along these lines – take two similar groups of people, make one hierarchical and another “self-organizing,” and measure the difference in results for some task.

As for giving up on understanding these effects, that idea I’m less fond of. Complex phenomena like markets work brilliantly sometimes and fail at others. We need to try to understand them in order to design better mechanisms over time. Maybe we can’t control them, but we can become better watch-makers.

To Jess’ point, I agree that culture and group norms are a huge factor in enabling less control-driven organizations. Everyone should have a well-grounded opinion on what needs to be done rather than being told.

I overstated things when I suggested that we give up on understanding. What I meant is to watch out for cognitive traps. Specifically, don’t confuse a convenient narrative with “understanding,” and don’t confuse “understanding” with the ability to control or replicate.

I totally agree. I think there’s much more “understanding” out there than true understanding, myself included.

Great debate to start. It’s not just about control, is it? We function through esteem systems in most areas of endeavor, so while some people want to capture collective intelligence to exert more control, many people involved in collective systems also want to build, participate in, and reinforce esteem systems that give people more power. There’s a trend towards saying complex human activity is adaptive rather than rational or planned. We have a big debate brewing, but the non-adaptive camp doesn’t have the same public esteem as the others right now, which is one cost of losing peer review.

Thanks for your comment, Haydn, and welcome to my blog! If we reframe power to be goal- rather than ego-centric, I think this resolves what you’re calling “esteem” issues. I will never be able to lift a car by myself, no matter how much I work out or how many PEDs I take. However, I can lift a car with ten other people. Being part of that group makes me feel more powerful than I can ever feel individually.

Of course, having the power to dictate what others do in that group might make me feel even more powerful. Again, I would hope that being goal-centric would help address this by demonstrating that a group where power is distributed is more effective than a group where power is centralized. A classic example of this would be Jimmy Wales deciding not to try to exert control over Wikipedia. That “power” would have been fool’s gold, because Wikipedia wouldn’t have become the phenomenon that it did. Now Jimmy hangs out with Bono and has his picture plastered all over Wikipedia come fundraising time, so I think the “give up control” approach ended up working out better for him from an “esteem” standpoint. 🙂